
A Practical Guide to Near-Infrared Cameras for Machine Vision

Near-infrared, or NIR, cameras are becoming increasingly important in modern machine vision systems. While standard cameras operate in the visible light spectrum, NIR cameras are designed to capture wavelengths just beyond what the human eye can see. This capability allows them to reveal details that are otherwise hidden, making them valuable in industrial inspection, research, medical imaging, and automation.

In many environments, visible light imaging is not enough. Materials may look similar in color but behave very differently under near-infrared light. Certain defects, moisture content, or contaminants may not be visible in the standard spectrum. NIR cameras solve this problem by providing enhanced contrast and deeper insight into materials and processes.

What Is Near-Infrared Imaging?

Near-infrared light sits just beyond the visible spectrum, typically between 700 nm and 1000 nm, although some sensors can detect even further into the infrared range. Human eyes cannot detect this light, but specially designed sensors can capture it and convert it into a usable image.

In a typical machine vision setup, a NIR camera is paired with a light source that emits near-infrared wavelengths. The…
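Why NIR imaging tops out around 1000 nm on many cameras can be made concrete with the photon-energy relation E = hc/λ: a silicon photodiode only generates charge when a photon's energy exceeds silicon's bandgap. The sketch below assumes a room-temperature silicon bandgap of about 1.12 eV; the 850 nm and 940 nm wavelengths are examples of commonly used NIR illuminators, not values taken from this guide. Real silicon response falls off before the computed cutoff because absorption weakens near the band edge, so treat the result as an upper bound rather than a sensor specification.

```python
HC_EV_NM = 1239.84  # Planck constant x speed of light, expressed in eV*nm

# Assumption: approximate room-temperature bandgap of crystalline silicon.
SILICON_BANDGAP_EV = 1.12


def photon_energy_ev(wavelength_nm: float) -> float:
    """Energy carried by one photon of the given wavelength, in eV."""
    if wavelength_nm <= 0:
        raise ValueError("wavelength must be positive")
    return HC_EV_NM / wavelength_nm


def cutoff_wavelength_nm(bandgap_ev: float) -> float:
    """Longest wavelength whose photons still carry at least the bandgap energy."""
    return HC_EV_NM / bandgap_ev


def silicon_can_absorb(wavelength_nm: float) -> bool:
    """True if a photon at this wavelength has enough energy to be absorbed by silicon."""
    return photon_energy_ev(wavelength_nm) >= SILICON_BANDGAP_EV


if __name__ == "__main__":
    # 550 nm (visible green), 850 and 940 nm (typical NIR illuminators), 1300 nm (beyond NIR)
    for wl in (550, 850, 940, 1300):
        print(f"{wl} nm: {photon_energy_ev(wl):.2f} eV, absorbed by Si: {silicon_can_absorb(wl)}")
    print(f"Approximate Si cutoff: {cutoff_wavelength_nm(SILICON_BANDGAP_EV):.0f} nm")
```

The cutoff lands near 1100 nm, which is consistent with silicon-based NIR cameras covering the 700 to 1000 nm range described above while longer wavelengths call for other detector materials.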

Applications of High-Speed Cameras: From Industrial Testing to Cinematic Storytelling

High-speed cameras have become essential tools across science, engineering, manufacturing, and even entertainment. By capturing events that happen in microseconds, they reveal details too fast for the human eye or conventional video equipment. Whether it is understanding how a product breaks during a drop test, analyzing complex fluid patterns, or producing cinematic slow motion for advertisements, high-speed imaging opens a window into hidden worlds.

In this blog post, we explore the major applications of high-speed cameras across industries, with examples ranging from crash tests and ballistics to content creation and biomechanics. These use cases highlight how powerful high-speed imaging has become for innovation, problem solving, and storytelling.

1. Product and Material Testing

One of the most common uses of high-speed cameras is in product and material testing. Understanding how materials behave under stress, impact, or motion helps engineers improve safety and durability.

Drop Testing

Companies conduct drop tests on everything from smartphones and appliances to packaging and protective gear. A high-speed camera captures the exact moment of impact and how shock waves travel through the product. Engineers can identify weak…

What Are High Speed Cameras: A Comprehensive Guide

If you have ever wondered how filmmakers capture a bullet piercing glass, how engineers study car crash impacts, or how scientists analyse the motion of a hummingbird's wings, the answer lies in high speed cameras. These specialized imaging systems record motion at thousands or even millions of frames per second, revealing details too fast for the human eye or a normal camera to detect. In this guide, we break down what high speed cameras are, how they work, and the key technologies that power them.

What Is a High Speed Camera?

A high speed camera is designed to capture video at frame rates far beyond the 24 to 60 fps recorded by most consumer or professional video cameras. Depending on the model, a high speed camera may capture anywhere from 1,000 fps to more than 1,000,000 fps. At these speeds, events that normally occur in an instant, such as a droplet hitting a surface, an airbag deploying, or a manufacturing defect, can be slowed down and analyzed in great detail. This makes high speed cameras indispensable in research, engineering, production,…
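The gap between 24 to 60 fps video and 1,000+ fps capture is easiest to see by counting how many frames land on a short event and how long the recording takes to play back. In this minimal sketch the 10 ms event duration and the 25 fps playback rate are hypothetical choices for illustration; the frame count is rounded, so it is approximate.

```python
def frames_captured(event_duration_s: float, capture_fps: float) -> int:
    """Approximate number of frames recorded while the event lasts."""
    return round(event_duration_s * capture_fps)


def slow_motion_factor(capture_fps: float, playback_fps: float) -> float:
    """How many times slower the event appears on playback."""
    return capture_fps / playback_fps


def playback_duration_s(event_duration_s: float, capture_fps: float,
                        playback_fps: float) -> float:
    """Time needed to play back the recorded event at playback_fps."""
    return event_duration_s * slow_motion_factor(capture_fps, playback_fps)


if __name__ == "__main__":
    event = 0.010  # hypothetical 10 ms event, e.g. a droplet impact
    for fps in (60, 1_000, 10_000):
        print(f"{fps:>6} fps: {frames_captured(event, fps)} frames, "
              f"{playback_duration_s(event, fps, 25):.2f} s at 25 fps playback")
```

At 60 fps the event occupies at most about one frame, while at 10,000 fps it spans roughly 100 frames and stretches to about 4 seconds of playback.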

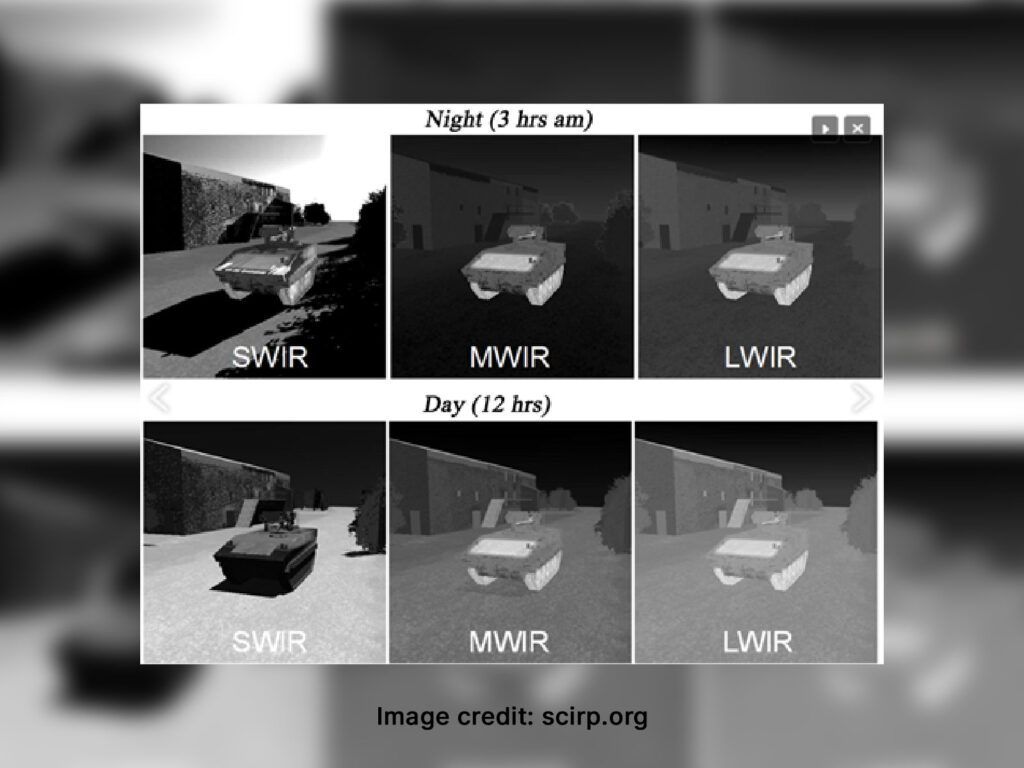

SWIR vs MWIR vs LWIR Cameras: Tech Specs Comparison & Applications

Infrared imaging is now an essential part of modern machine vision, industrial monitoring, defence systems, and surveillance. But while most people refer to “thermal cameras” broadly, infrared imaging actually spans multiple wavelength bands, each with different behaviours, advantages, and limitations. The three most widely used infrared categories are SWIR (Short-Wave Infrared), MWIR (Mid-Wave Infrared) and LWIR (Long-Wave Infrared). Understanding how these technologies differ can help you make the right choice for your project, whether it's inspection, temperature measurement, environmental monitoring or security.

Understanding the Infrared Spectrum

Infrared light sits just beyond the visible spectrum and contains several atmospheric transmission windows. These windows influence how well each wavelength can penetrate fog, dust, glass, silicon or moisture.

- SWIR: 0.9 to 1.7 μm (sometimes up to 2.5 μm)
- MWIR: 3 to 5 μm
- LWIR: 8 to 14 μm

Each region behaves differently. SWIR relies mainly on reflected light, much like visible imaging. MWIR and LWIR detect emitted heat, but MWIR is more sensitive to fast temperature changes, while LWIR excels in general thermal detection.

How Each Camera Type Works…
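The band limits listed above can be encoded as a small lookup, for example to sanity-check which camera class a given illuminator or target emission wavelength falls into. This is a sketch that uses the guide's ranges verbatim; the `extended_swir` flag is a hypothetical switch for sensors that reach about 2.5 μm. Wavelengths between the bands return `None`, since those gaps lie outside the three transmission windows discussed here.

```python
from typing import Optional

# Band limits in micrometres, following the ranges listed above.
BANDS = (
    ("SWIR", 0.9, 1.7),
    ("MWIR", 3.0, 5.0),
    ("LWIR", 8.0, 14.0),
)
EXTENDED_SWIR_UPPER_UM = 2.5  # some SWIR sensors reach about 2.5 um


def classify_ir_band(wavelength_um: float, extended_swir: bool = False) -> Optional[str]:
    """Return the band name for a wavelength, or None if it lies outside all three bands."""
    for name, lower, upper in BANDS:
        if name == "SWIR" and extended_swir:
            upper = EXTENDED_SWIR_UPPER_UM
        if lower <= wavelength_um <= upper:
            return name
    return None


if __name__ == "__main__":
    for wl in (1.55, 2.2, 4.0, 6.0, 10.0):
        print(wl, classify_ir_band(wl), classify_ir_band(wl, extended_swir=True))
```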

How to Select the Right LWIR Camera for Surveillance Applications

Long-Wave Infrared (LWIR) cameras have become essential in modern surveillance because they detect heat instead of relying on visible light. Operating in the 8 to 14 µm range, they work in complete darkness and through challenging conditions such as fog, haze, smoke, and glare. Their passive operation makes them dependable for round-the-clock monitoring across industrial sites, borders, perimeters, and critical infrastructure. Selecting the right LWIR camera requires understanding several technical parameters, especially when the application demands reliable detection and identification.

Understanding LWIR Technology

Most LWIR surveillance cameras use uncooled microbolometer sensors, which respond to the thermal radiation emitted by objects in the scene and convert temperature differences into thermal images. Since all objects above absolute zero emit thermal radiation, LWIR cameras function independently of lighting conditions. This makes them ideal for environments where visible cameras fail, such as at night, during storms, or in visually obstructed locations, and supports consistent surveillance across day and night cycles without artificial illumination.

Evaluating Key LWIR Camera Specifications

Selecting an LWIR camera requires evaluating its sensor attributes, sensitivity, image processing capabilities, and output interface. Each factor directly affects performance, clarity, and…
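One common way to tie sensor and lens specifications to "reliable detection and identification" is a pixels-on-target estimate in the spirit of the Johnson criteria: each pixel subtends an angle of roughly pixel pitch divided by focal length, and a task succeeds only if the target spans enough pixels. The pixel thresholds below (1.5, 6 and 12 pixels) are illustrative assumptions, since vendors and standards use different values, and the 12 μm pitch, 50 mm lens and 1.8 m target are hypothetical. The estimate ignores atmospheric attenuation and thermal sensitivity, so it is an optimistic geometric limit.

```python
# Illustrative pixel counts across the target for each task (assumption; real
# thresholds vary by vendor, standard, and required confidence).
PIXELS_REQUIRED = {"detection": 1.5, "recognition": 6.0, "identification": 12.0}


def ifov_rad(pixel_pitch_m: float, focal_length_m: float) -> float:
    """Angle subtended by one pixel (small-angle approximation)."""
    return pixel_pitch_m / focal_length_m


def max_range_m(target_size_m: float, pixels_required: float,
                pixel_pitch_m: float, focal_length_m: float) -> float:
    """Distance at which the target still spans pixels_required pixels."""
    return target_size_m / (pixels_required * ifov_rad(pixel_pitch_m, focal_length_m))


if __name__ == "__main__":
    # Hypothetical camera: 12 um pixel pitch, 50 mm lens, 1.8 m tall person.
    for task, px in PIXELS_REQUIRED.items():
        print(f"{task:>14}: {max_range_m(1.8, px, 12e-6, 0.050):.0f} m")
```

Doubling the focal length doubles every range, at the cost of a narrower field of view, which is the core trade-off when matching an LWIR lens to a perimeter.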

Long-Range Ground-Based Surveillance Systems: Key Technologies Explained

In today's world, securing wide and open areas such as borders, coastlines, airports, and energy facilities is more challenging than ever. Threats can emerge at long distances, often before they are visible to the naked eye. To stay ahead, organizations are increasingly relying on long-range ground-based surveillance systems: integrated solutions that bring together multiple technologies to provide continuous, accurate monitoring day and night, in all weather conditions.

At the heart of these systems are five critical components: electro-optical (EO) cameras, infrared (IR) cameras, laser range finders (LRF), radar systems, and pan-tilt positioners. Each plays a unique role, and together they create a powerful multi-sensor surveillance solution.

Why Long-Range Surveillance Matters

Wide-area surveillance is never simple. A single border fence can stretch hundreds of kilometres, and a coastal area might face harsh fog, salt spray, and unpredictable weather. Relying on one type of sensor is not enough: visible-light cameras may struggle at night, radar may have difficulty identifying objects, and lasers alone cannot scan large areas. That's why long-range systems integrate complementary technologies, ensuring early detection, clear identification, and precise tracking. This multi-sensor approach allows security…
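In a multi-sensor system of this kind, a radar detection typically cues the pan-tilt positioner so the EO/IR cameras and the laser range finder point at the target. The geometry of that hand-off can be sketched as follows, assuming the radar is co-located with the positioner and reports slant range and azimuth; `radar_to_enu`, `cue_from_enu` and `PointingCommand` are hypothetical names, not a product API. Real systems also correct for sensor offsets, mast levelling and, at long range, Earth curvature, all of which this sketch ignores.

```python
import math
from dataclasses import dataclass


@dataclass
class PointingCommand:
    pan_deg: float        # bearing, clockwise from north, 0 to 360
    tilt_deg: float       # elevation, positive above the horizon
    slant_range_m: float  # expected straight-line distance, e.g. to cross-check an LRF reading


def radar_to_enu(range_m: float, azimuth_deg: float, height_diff_m: float = 0.0):
    """Hypothetical helper: co-located radar slant range/azimuth to (east, north, up)."""
    ground = math.sqrt(max(range_m ** 2 - height_diff_m ** 2, 0.0))
    az = math.radians(azimuth_deg)
    return ground * math.sin(az), ground * math.cos(az), height_diff_m


def cue_from_enu(east_m: float, north_m: float, up_m: float) -> PointingCommand:
    """Turn a target offset (east, north, up) from the positioner into pointing angles."""
    pan = math.degrees(math.atan2(east_m, north_m)) % 360.0
    ground_range = math.hypot(east_m, north_m)
    tilt = math.degrees(math.atan2(up_m, ground_range))
    slant = math.hypot(ground_range, up_m)
    return PointingCommand(pan, tilt, slant)


if __name__ == "__main__":
    # Hypothetical radar track: 3 km away, bearing 135 degrees, 50 m above the positioner.
    cmd = cue_from_enu(*radar_to_enu(3000.0, 135.0, 50.0))
    print(f"pan {cmd.pan_deg:.1f} deg, tilt {cmd.tilt_deg:.2f} deg, range {cmd.slant_range_m:.0f} m")
```

Once the positioner has slewed, the LRF's measured distance can be compared with `slant_range_m` to confirm that the camera is looking at the same object the radar reported.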

A Guide to Pan-Tilt Positioners: Features, Selection, and Use Cases

In industries where precision monitoring and remote control are critical, pan-tilt positioners play a vital role. From defence surveillance and border security to industrial and offshore monitoring, these systems provide smooth, accurate control of cameras, sensors, and antennas. By enabling wide coverage, flexible movement, and reliable positioning, pan-tilt positioners help operators make better decisions in real time.

This guide will break down what pan-tilt positioners are, their key specifications, how to select the right one, and the industries where they are most widely used.

What Is a Pan-Tilt Positioner?

A pan-tilt positioner (PTP) is a mechanical device designed to control the orientation of equipment, usually cameras, sensors, or antennas.

- Pan refers to horizontal rotation (side to side).
- Tilt refers to vertical movement (up and down).

Unlike consumer-grade pan-tilt camera heads, industrial-grade positioners are built to handle higher payloads, extreme environments, and continuous operation. They are widely used in security, defence, marine, and industrial automation systems.

Key Features and Specifications of Pan-Tilt Positioners

When evaluating pan-tilt positioners, engineers and system integrators usually focus on the following parameters:

1. Pan Speed &…
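Pan speed is usually quoted as a maximum angular velocity, but the time needed to reach a new bearing also depends on acceleration and on which way the head turns. This sketch assumes a symmetric trapezoidal velocity profile (accelerate, cruise, decelerate) and continuous 360° pan rotation; the 60°/s speed and 120°/s² acceleration are hypothetical values, not figures from any datasheet.

```python
import math


def shortest_pan_delta_deg(current_deg: float, target_deg: float) -> float:
    """Signed pan move in (-180, 180], assuming continuous 360-degree rotation."""
    delta = (target_deg - current_deg + 180.0) % 360.0 - 180.0
    return 180.0 if delta == -180.0 else delta


def slew_time_s(angle_deg: float, max_speed_dps: float, accel_dps2: float) -> float:
    """Time to rotate through angle_deg with a symmetric trapezoidal velocity profile."""
    angle = abs(angle_deg)
    if angle == 0:
        return 0.0
    # Angle used up while accelerating to max speed and decelerating back to zero.
    ramp_angle = max_speed_dps ** 2 / accel_dps2
    if angle >= ramp_angle:
        # Trapezoid: ramp up, cruise at max speed, ramp down.
        return angle / max_speed_dps + max_speed_dps / accel_dps2
    # Triangle: the move is too short to ever reach max speed.
    return 2.0 * math.sqrt(angle / accel_dps2)


if __name__ == "__main__":
    for start, target in ((0.0, 180.0), (350.0, 10.0)):
        move = shortest_pan_delta_deg(start, target)
        print(f"{start} -> {target}: move {move:+.0f} deg, "
              f"{slew_time_s(move, 60.0, 120.0):.2f} s")
```

Short moves are dominated by acceleration rather than top speed, so two positioners with the same quoted pan speed can respond quite differently when tracking nearby targets.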

Industrial High-Speed Cameras: Key Technologies, Interfaces, and Applications

In the world of industrial automation, quality control, and scientific research, seeing what the naked eye can't is often the key to precision and progress. That's where high-speed cameras come in. These powerful imaging tools are designed to capture thousands of frames per second, revealing split-second events in stunning detail. But not all high-speed cameras are created equal, and not every interface can handle the data they generate. This article explores what makes a camera high-speed, the interfaces that power them, and how technologies like GigE Vision fit into the picture.

What Makes a Camera 'High-Speed'?

A high-speed camera is typically defined by its ability to capture hundreds or even thousands of frames per second (fps). But frame rate alone doesn't tell the whole story. Here are the core components that determine a camera's high-speed capabilities:

1. Frame Rate

Entry-level high-speed cameras may start around 250 fps, while advanced models can exceed 1,000,000 fps.

2. Shutter Speed

To freeze motion without blur, exposure times often need to be in the microsecond range. High-speed cameras have a short exposure mode to support the…
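Both constraints mentioned here, whether an interface can carry the camera's data and how short the exposure must be to freeze motion, can be estimated with simple arithmetic. This sketch assumes uncompressed 8-bit monochrome output and ignores protocol overhead; the 1 Gbit/s figure is the nominal Gigabit Ethernet line rate (usable payload is somewhat lower), and the resolutions, frame rates, part speed and field of view are hypothetical.

```python
GIGE_NOMINAL_GBPS = 1.0  # nominal Gigabit Ethernet line rate; usable payload is lower


def raw_data_rate_gbps(width_px: int, height_px: int, bits_per_pixel: int, fps: float) -> float:
    """Uncompressed sensor output in Gbit/s, ignoring protocol overhead."""
    return width_px * height_px * bits_per_pixel * fps / 1e9


def max_exposure_s(object_speed_mps: float, field_of_view_m: float,
                   pixels_across: int, max_blur_px: float = 1.0) -> float:
    """Longest exposure that keeps motion blur at or below max_blur_px pixels."""
    metres_per_pixel = field_of_view_m / pixels_across
    return max_blur_px * metres_per_pixel / object_speed_mps


if __name__ == "__main__":
    for w, h, fps in ((640, 480, 250), (1920, 1080, 1000)):
        rate = raw_data_rate_gbps(w, h, 8, fps)
        print(f"{w}x{h} @ {fps} fps, 8-bit: {rate:.2f} Gbit/s, "
              f"within nominal GigE: {rate <= GIGE_NOMINAL_GBPS}")
    # Hypothetical part moving at 2 m/s through a 200 mm field of view imaged by 1000 pixels.
    print(f"Max exposure for 1 px blur: {max_exposure_s(2.0, 0.2, 1000) * 1e6:.0f} us")
```

A 250 fps VGA stream fits within a single GigE link, whereas full HD at 1,000 fps produces over 16 Gbit/s, which is why faster interfaces or on-camera memory become necessary at higher speeds.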

How to Choose the Right Weld Camera: A Buyer's Guide for Industrial Applications

In today's fast-paced manufacturing and fabrication environments, weld cameras have become essential tools for improving weld quality, enhancing operator safety, and enabling process automation. From shipbuilding yards to robotic welding lines in automotive plants, choosing the right weld camera can directly impact productivity and inspection accuracy. But with so many options available, how do you determine which weld camera fits your specific needs? In this guide, we'll walk you through the key factors to consider when selecting a weld camera and how to match the right model to your application.

1. Understand the Welding Process

The first step in choosing a weld camera is identifying the type of welding process used in your operation. Different processes produce varying light intensities, smoke levels, and thermal signatures.

- MIG (Metal Inert Gas): Produces a bright arc and heavy spatter; requires high dynamic range (HDR) imaging.
- TIG (Tungsten Inert Gas): A cleaner process, but still demands fine image clarity.
- Laser Welding: Needs precise focus and fast response due to high energy density.
- Submerged Arc or Plasma Arc: May need thermal or infrared…
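Why a bright MIG arc calls for HDR imaging can be checked by comparing the scene's brightest-to-darkest ratio with the sensor's dynamic range, which camera datasheets commonly quote in decibels using the 20·log10 convention. In this sketch the 1,000,000:1 scene ratio is a hypothetical stand-in for an arc next to a dim seam, not a measured value, and the 60 dB and 120 dB sensors are illustrative.

```python
import math


def ratio_to_db(ratio: float) -> float:
    """Convert a brightest/darkest signal ratio to decibels (20*log10 convention)."""
    return 20.0 * math.log10(ratio)


def db_to_ratio(db: float) -> float:
    """Convert a dynamic range in decibels back to a plain ratio."""
    return 10.0 ** (db / 20.0)


def sensor_covers_scene(scene_ratio: float, sensor_dr_db: float) -> bool:
    """True if the sensor's dynamic range spans the scene's contrast in a single exposure."""
    return ratio_to_db(scene_ratio) <= sensor_dr_db


if __name__ == "__main__":
    scene = 1e6  # hypothetical arc-to-seam brightness ratio
    for dr_db in (60.0, 130.0):
        print(f"{dr_db:.0f} dB sensor ({db_to_ratio(dr_db):,.0f}:1) covers scene: "
              f"{sensor_covers_scene(scene, dr_db)}")
```

Every 20 dB adds a factor of ten in usable contrast, so a sensor with too little dynamic range must either clip the arc or lose the surrounding seam and weld pool in shadow.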

Frame-Based Imaging vs. Event-Based Imaging: What's the Difference and Why It Matters

In the rapidly evolving world of computer vision and machine learning, imaging systems play a central role across industries, from robotics to medical devices. Traditional imaging has long relied on frame-based approaches, but a newer alternative, event-based imaging, is gaining traction due to its speed and efficiency. Understanding the differences between these two paradigms can help engineers and researchers select the right technology for their needs.

What Is Frame-Based Imaging?

Frame-based imaging refers to the conventional method of capturing visual data as full frames at regular intervals. Each frame captures the entire scene at once, regardless of whether any part of the image has changed since the last capture. This method is used by most digital cameras, whose CMOS or CCD sensors generate colour or grayscale images.

This approach is well suited to static or predictable environments, where capturing the full image at consistent intervals provides enough information. However, it can be inefficient in dynamic scenes, where much of the data in each frame may remain unchanged. Additionally, frame-based systems can suffer from motion…
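The contrast between the two paradigms can be illustrated by emulating event output from a pair of ordinary frames. A common simplified model of an event sensor emits an event, made up of a pixel position and a polarity, whenever that pixel's log intensity changes by more than a contrast threshold, and reports nothing for unchanged pixels. This is only an approximation: real event sensors respond asynchronously per pixel with fine timing rather than by differencing frames. The `frames_to_events` name, the 0.2 threshold and the small `eps` offset that avoids log(0) are assumptions for illustration.

```python
import numpy as np


def frames_to_events(prev: np.ndarray, curr: np.ndarray,
                     threshold: float = 0.2, eps: float = 1e-3):
    """Emit (x, y, polarity) for pixels whose log intensity changed by at least threshold."""
    diff = np.log(curr.astype(np.float64) + eps) - np.log(prev.astype(np.float64) + eps)
    ys, xs = np.nonzero(np.abs(diff) >= threshold)
    polarity = np.sign(diff[ys, xs]).astype(np.int8)  # +1 brighter, -1 darker
    return list(zip(xs.tolist(), ys.tolist(), polarity.tolist()))


if __name__ == "__main__":
    prev = np.full((4, 4), 100.0)
    curr = prev.copy()
    curr[1, 2] = 200.0  # one pixel gets brighter
    curr[3, 0] = 50.0   # one pixel gets darker
    print(frames_to_events(prev, curr))
```

For this 16-pixel frame only two pixels change, so the event representation carries two entries instead of a full frame, which is the efficiency argument made above for dynamic scenes.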